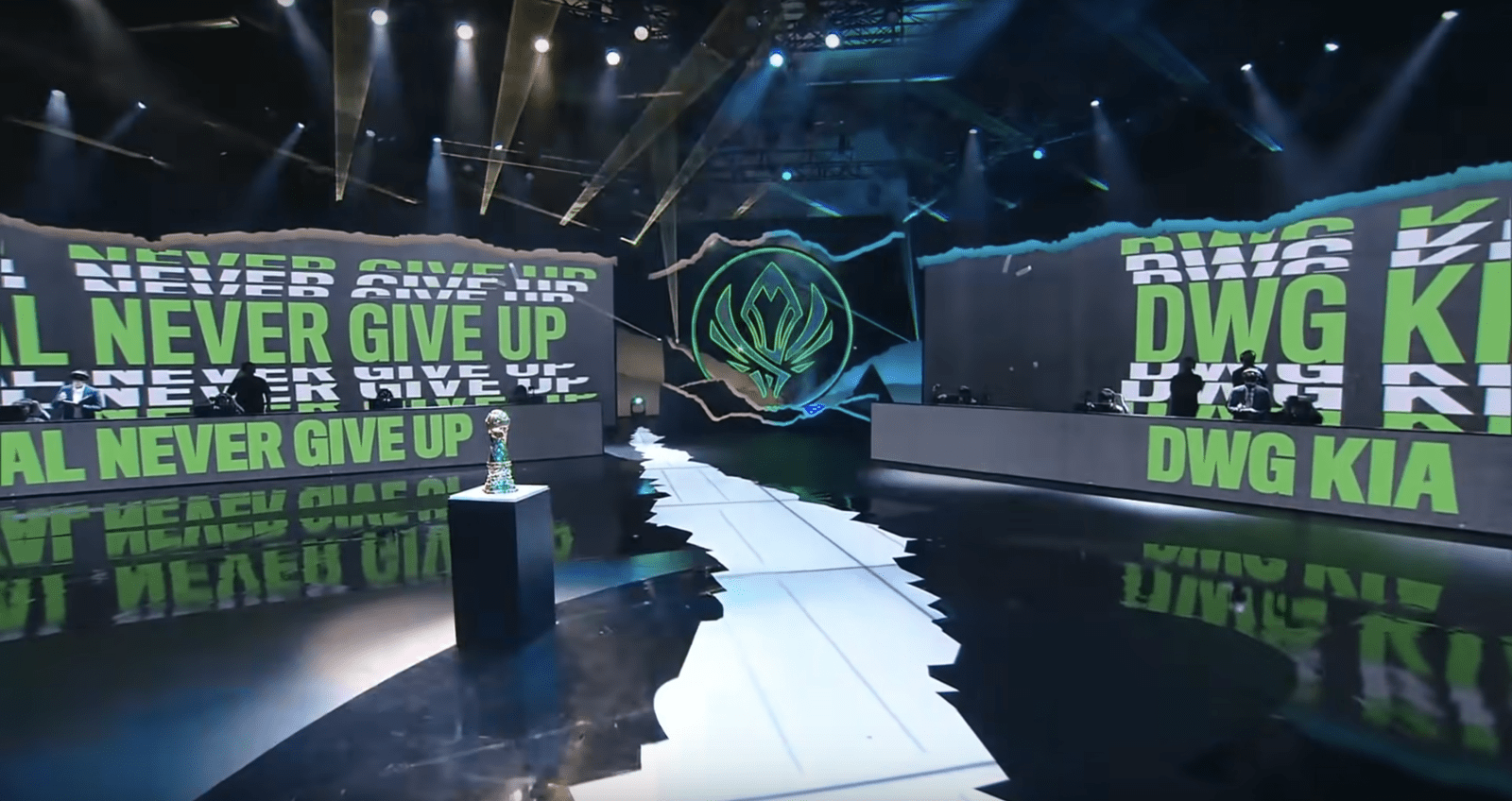

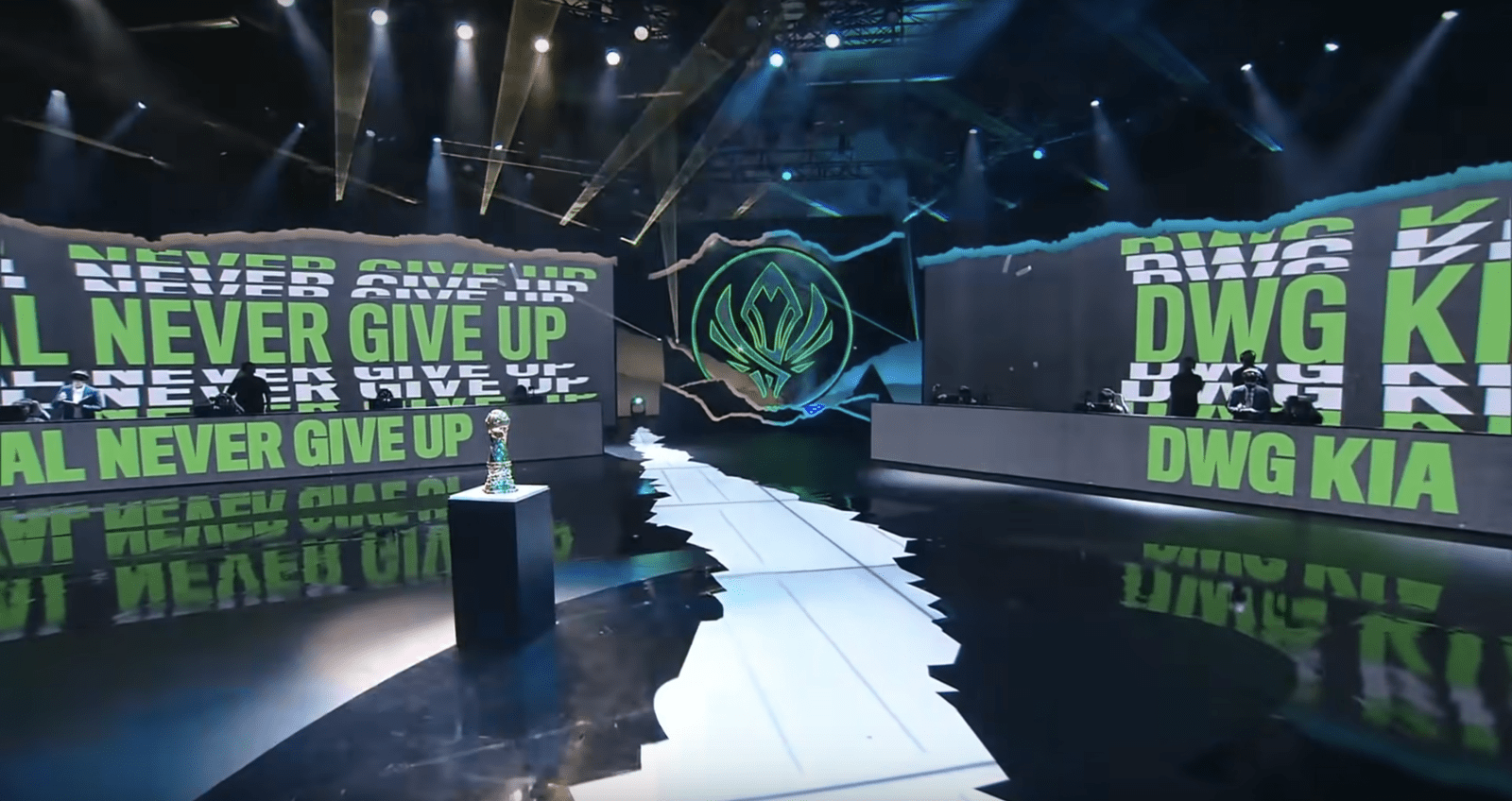

In 2019, Riot Games became the first organization in the world to use JPEG XS compression for a trans-Atlantic remote production, deploying it for the League of Legends World Championship in Paris. Last month, Riot’s Esports Technology Group aimed to take its use of JPEG XS to the next level during the back-to-back League of Legends Mid-Season Invitational (MSI) and Valorant Champions Tour (VCT) Masters Reykjavík events in Iceland, its first in-person events since the LoL World Championship in Shanghai in October.

“Originally,” says Scott Adametz, head of Esports Technology Group, Riot Games, “we were planning on this being a test [of JPEG XS] for Iceland; it wasn’t planned to be used for the actual production paths. But the technology worked so well in the test, and we became comfortable enough to decide, the week before the show went on-air, to use it for the live production. XS ended up solving some needs we didn’t even realize we had with regards to transmission delay.”

For many in the industry, the JPEG XS standard represents the next great leap forward in transmission. The codec delivers high-quality compression with ratios up to 10:1 at sub-millisecond latency, and compressed video can be transported using SMPTE ST 2110-22 over a WAN (wide-area network).

Riot Games’ broadcast team had been using JPEG2000 for several years but has found a wealth of new possibilities for remote production and ultra-fast transmission with JPEG XS, according to Adametz. “This is unlocking a different way to produce content going forward,” he says.
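To put the 10:1 figure in context, the rough arithmetic can be sketched as below. The sketch assumes 4:2:2 10-bit sampling (20 bits per pixel on average) and counts only the active picture. Neither detail is stated here, and the ratio Riot actually ran is not given, so 10:1 is used only as the upper bound quoted above.

```python
# Back-of-the-envelope bitrate for one 1080p60 feed.
# Assumptions (not from the article): 4:2:2 10-bit sampling, active picture only.

BITS_PER_PIXEL_422_10BIT = 20  # 10 bits luma + 10 bits shared chroma per pixel


def active_video_bps(width: int, height: int, fps: int, bits_per_pixel: int) -> int:
    """Uncompressed active-video bitrate in bits per second."""
    return width * height * fps * bits_per_pixel


def compressed_bps(uncompressed_bps: float, ratio: float) -> float:
    """Bitrate after compressing at ratio:1."""
    return uncompressed_bps / ratio


hd_raw = active_video_bps(1920, 1080, 60, BITS_PER_PIXEL_422_10BIT)
hd_xs = compressed_bps(hd_raw, 10)  # 10:1 is the upper bound, not Riot's setting

print(f"uncompressed: {hd_raw / 1e9:.2f} Gbps")
print(f"at 10:1:      {hd_xs / 1e6:.0f} Mbps")
```

Under those assumptions, a single feed drops from roughly 2.5 Gbps to roughly 250 Mbps.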

Three Production Hubs Come Together Via JPEG XS, Riot Direct Network

Riot produced both the MSI (May 6-23) and VCT Masters (May 24-30) events using three hubs: a truck onsite in Reykjavík, Riot’s European headquarters in Berlin, and LCS Arena in Los Angeles — all connected via the company’s dedicated Riot Direct network.

The truck on hand at Reykjavík’s Laugardalshöll indoor sports arena produced the main world feed, which is distributed to 15 regions around the globe via AWS public cloud. Meanwhile, the English-language show was produced out of Berlin, and casters called the action from L.A.

To make it easier for distribution partners, Riot sent the world feed from Reykjavík to the nearest hub in the cloud, allowing regional partners to pull locally from their nearest AWS point-of-presence. This allowed all 15 regional partners to bring the world feed seamlessly into their own production facilities, version it, create caster commentary, and insert graphics in real time.

“We’ve been able to set up a triangle of feeds interconnecting three of our studios in near real time [in] Iceland, Los Angeles, and Berlin,” says James Wyld, infrastructure engineer, Riot Esports Technology Group. “Our initial goal was to set up a trial of JPEG XS to get a picture of how it combines with Riot Direct’s network and what kind of reliability we get between studios and between continents. We wanted to answer the question: is this a feasible transmission technology we can implement for future shows, and how far can we take it?”

As proof-of-concept, Riot simply wanted to set up JPEG XS feeds parallel to its primary production paths and compare them. However, the company was so impressed by the stability of JPEG XS from the outset that, just one week before going on-air, it opted to transition the live production.

“Very quickly,” says Wyld, “we found ourselves using it to deliver all of our in-house return feeds to screens within the venue [Iceland] from the English-language production [Berlin]. Since then, we’ve been able to identify more opportunities where the tech can be used as an improvement over some of our other workflows.”

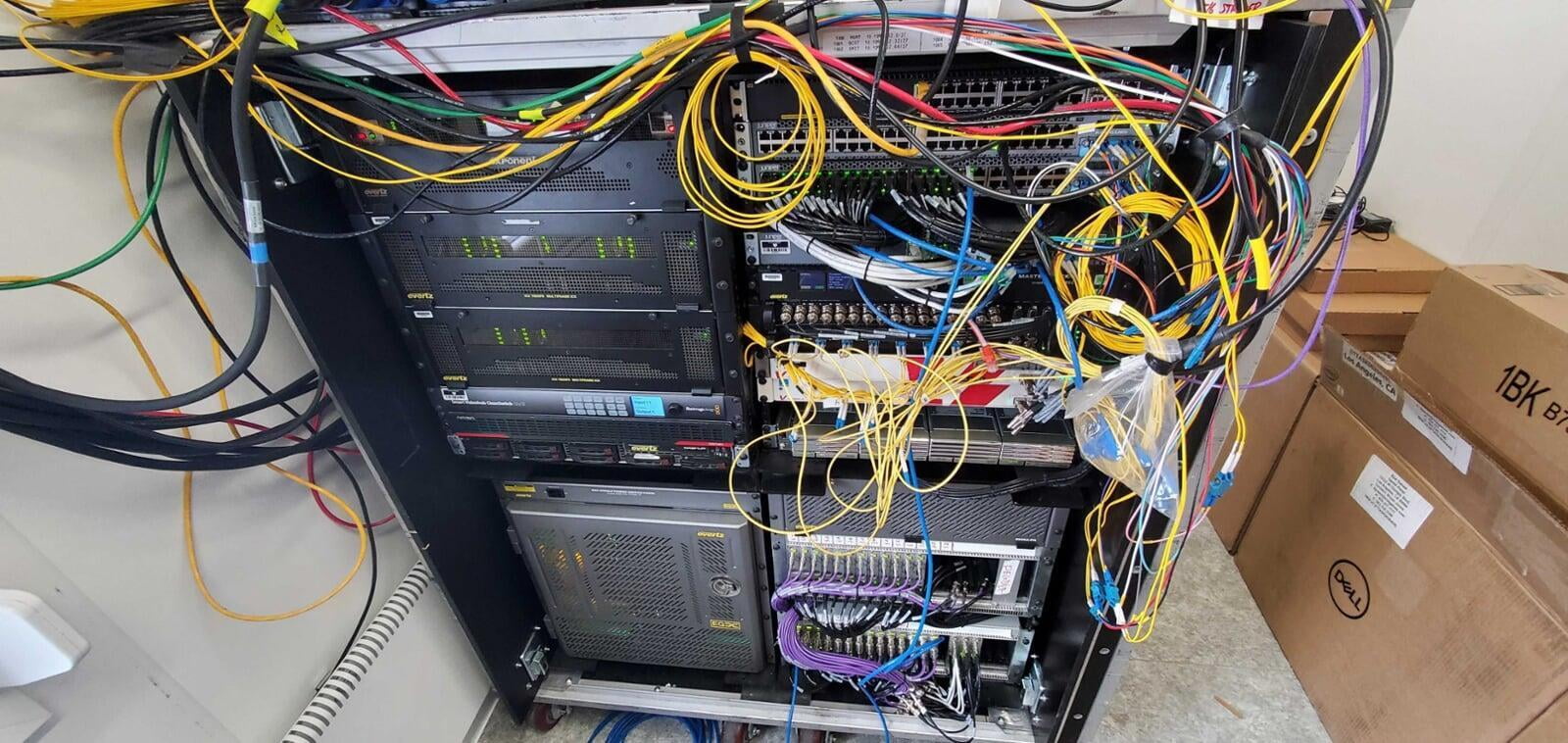

The Riot Direct network connecting the three sites is powered entirely by Cisco NCS hardware, each site equipped with Cisco Nexus 9000 switches controlled by DCNM and deployed in an IP Fabric for Media (IPFM) topology.

Even with only 10 ms of jitter buffer in the transmission paths, Riot’s broadcast team experienced zero missing/dropped frames, thanks to the efficiency of JPEG XS and the Riot Direct network.

Although Riot was running a whopping 72 codecs with 36 possible paths of JPEG XS on its global network, this massive quantity required only four Nevion Virtuoso frames (totaling four rack units).

“We’re able to do all that we’re doing on this show using just four rack units, which is unbelievable,” says Adametz. “And it’s not just smaller; it’s more powerful and more reliable than anything we’ve done in the past.”

The Most Data-Hungry Event We’ve Ever Done

With each of the three production hubs pumping out 3-4 Gbps, the MSI and VCT Masters events were “by far, the most data-hungry event that we’ve ever done,” says Adametz.

In all, Riot transmitted a mind-blowing 4.235 PB between the three sites through the MSI Finals (the previous record was set at Worlds 2020 with 3.2 PB). To put that in perspective, 4.235 PB is the equivalent of 14 years of 24/7 Full HD video recording or 16,940 digital photos captured per day over your entire life. The movie Avatar needed about 1 PB of storage to render those graphics; Riot transferred more than four times that amount in six weeks.
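As a rough sanity check on these figures, the totals can be turned into an average rate. The sketch below assumes decimal petabytes (10^15 bytes) and traffic spread evenly over the full six weeks. Neither is stated, and real traffic would have been far burstier.

```python
# Assumptions (not from the article): 1 PB = 10**15 bytes, and the transfer is
# spread evenly over 42 days.
PB = 10**15
total_bytes = 4.235 * PB            # total through the MSI Finals
previous_record_bytes = 3.2 * PB    # Worlds 2020
seconds = 6 * 7 * 24 * 60 * 60      # six weeks

average_bps = total_bytes * 8 / seconds
increase = total_bytes / previous_record_bytes - 1

print(f"average rate: {average_bps / 1e9:.1f} Gbps")
print(f"increase over Worlds 2020: {increase:.0%}")
```

An average in the neighborhood of 9 Gbps across the network is in the same range as three hubs each sending 3-4 Gbps.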

“All credit to the brilliant engineers around the world that support the Esports Technology Group,” says Adametz. “We could not have done this without their investment of time and energy in making this work. This is a group of really talented engineers pushing the boundaries of what we thought was possible. And that’s what Riot is all about.”

Controlling a Switcher From 4,300 Miles Away

In addition to producing data-heavy, multiple-site broadcasts using JPEG XS, Riot conducted some R&D experiments during the Iceland shows in conjunction with Nevion, Grass Valley, Riedel, and TAG VS. Thanks to the low latency of JPEG XS, the company was able to remotely control broadcast equipment thousands of miles from Reykjavík without noticeable delay for the operator. Specifically, a TD located in Reykjavík controlled a Grass Valley Kayenne K-Frame switcher in Los Angeles with just a third-of-a-second latency.

“TD’s calling cams in real time is possible because of XS,” says Maxwell Trauss, broadcast tech producer, Riot Esports Technology Group. “Even one or two seconds [of latency] breaks the flow of the call. XS makes that possible remotely.

“The real benefit of XS,” he continues, “is that it can exist inside the 2110 standard. We’ve been using J2K with great success for years, but now XS is even faster. We’re getting closer and closer to the speed of the network being the only limiting factor in how quickly we can get video around. Having the speed of XS makes it much easier for production staff to remote-control a switcher and makes calling cameras from far away feel more natural.”
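Trauss's point about the network becoming the limiting factor can be illustrated with a propagation estimate. The sketch below treats the 4,300 miles in the heading above as a straight-line fiber run and assumes a refractive index of about 1.47 for optical fiber. Both are simplifications, since real routes are longer and add switching and buffering delay.

```python
# Assumptions (not from the article): fiber refractive index of 1.47 and a
# straight-line path; real routes are longer, so this is a lower bound.
C_KM_PER_S = 299_792.458   # speed of light in vacuum
FIBER_INDEX = 1.47         # typical value for silica fiber (assumed)
KM_PER_MILE = 1.609344


def one_way_delay_ms(distance_miles: float) -> float:
    """Propagation delay in milliseconds over an ideal fiber path."""
    distance_km = distance_miles * KM_PER_MILE
    speed_km_per_s = C_KM_PER_S / FIBER_INDEX
    return distance_km / speed_km_per_s * 1000


one_way = one_way_delay_ms(4300)
round_trip = 2 * one_way

print(f"one way:    {one_way:.0f} ms")
print(f"round trip: {round_trip:.0f} ms")
```

On those assumptions, propagation alone accounts for roughly 34 ms each way, a small share of the third of a second reported for the remote switcher.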

Looking Ahead: Remote Operation, 4K, and Continuing To Embrace the Cloud

According to Adametz, Riot is currently planning its first remotely switched broadcast using JPEG XS with the TD and the control panel thousands of miles from the physical switcher frame. “It’s possible only because XS is so fast,” he says. “You make a cut, and it’s so quick a round trip that it’s equivalent to being right in the room even though you may be thousands of miles away. That’s the power of XS: it’s reliable, it looks amazing, and it’s amazingly fast.”

Currently, Riot produces its broadcasts in 1080p60, but, with JPEG XS, Adametz says, the Esports Technology Group is looking to boost the broadcasts to 4K in the near future.

“We’re also looking forward to the UHD support, which we’ll need for future games,” he explains. “We want to be early with 4K, and 8K is just around the corner, because we can render [gameplay] in any resolution. We don’t have to change the codec; we can just keep the same codec and up the data rate.”
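The remark about keeping the codec and raising the data rate follows from pixel counts. As a rough sketch, assuming the frame rate, sampling, and compression ratio all stay fixed (none of which is confirmed for Riot's future plans), the required rate scales with the number of pixels per frame:

```python
# Assumption (not from the article): frame rate, sampling, and compression
# ratio are held constant, so data rate scales with pixels per frame.
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "4K UHD": (3840, 2160),
    "8K UHD": (7680, 4320),
}

base_pixels = 1920 * 1080
scale = {name: (w * h) / base_pixels for name, (w, h) in RESOLUTIONS.items()}

for name, factor in scale.items():
    print(f"{name}: {factor:.0f}x the 1080p data rate")
```

Under that assumption, a 4K feed needs about four times the bandwidth of a 1080p feed and an 8K feed about sixteen times.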

Adametz also believes that JPEG XS will allow Riot to continue to embrace cloud-based workflows (Riot created a fully cloud-based virtualized production workflow for live broadcasts during the pandemic) without having to sacrifice the power of high-end broadcast hardware.

“We’ve realized the power of moving production into the cloud but not necessarily sacrificing the enterprise-grade gear,” he adds. “For us, controlling a switcher remotely solves a lot of the problems that [cloud-based software] was solving for us but without any of the limitations. When we do the remote control of a full-on K-Frame, we’re getting all the functionality and benefits of being anywhere, but we’re still getting the power of a Grass Valley switcher at the core of our shows.

“That’s the model we’re going for: not cutting our resources, just allowing them to be remotely controlled from anywhere in the world.”